AI for private company knowledge should start with control, not hype. Your documentation is not just a set of files. It holds your company knowledge: how work actually gets done, how decisions are made, and how outcomes stay consistent.

That is exactly why AI adoption in this area has to start with one principle: keep your company knowledge private.

Before talking about models, prompts, or assistants, ask the foundational questions:

- Where is our knowledge stored?

- Who can access it?

- Is it isolated to our organization?

- Is it used to train external models?

- Can we control retention, deletion, and governance?

For most organizations, the risk is not using AI. The risk is moving too fast and losing control of internal knowledge in the process.

Larry is built to avoid that tradeoff.

Private Company Knowledge AI Without Losing Control

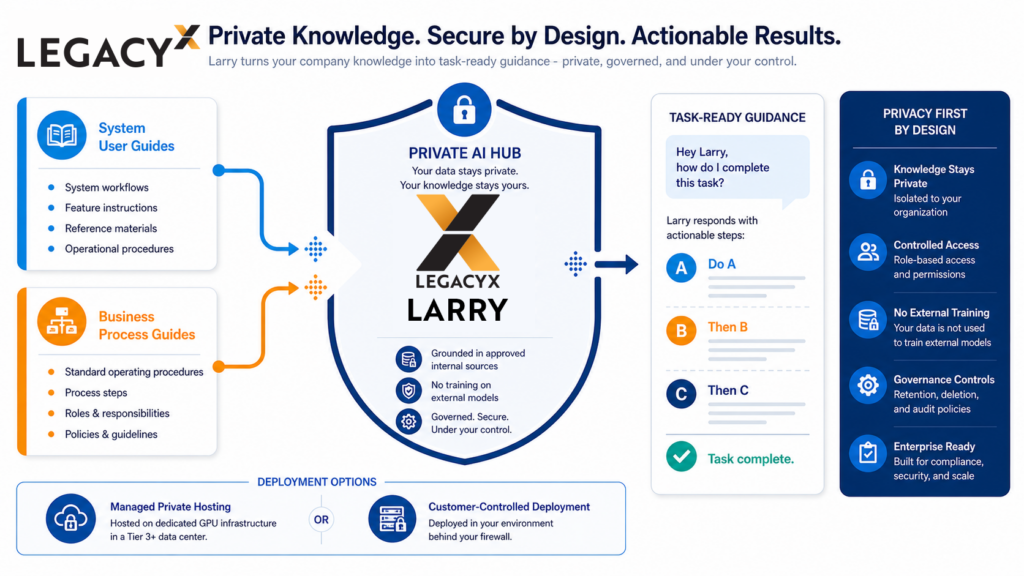

Larry, our private AI knowledge management platform, helps teams put private company knowledge to practical use by combining system user guides and business process guides into task-ready guidance. Instead of jumping between manuals and trying to stitch context together, a user can ask:

“Hey Larry, how do I complete this task?” and get a grounded, actionable response:

- Do A

- Then B

- Then C

Every response is grounded in approved internal sources.

That is the point: private knowledge, usable execution.

You get faster onboarding, fewer process mistakes, and less dependency on tribal knowledge, without defaulting to public AI workflows that blur your data boundaries.

Deployment matters here too. Larry supports two privacy-first models:

- Managed private hosting on LegacyX GPU infrastructure in a Tier 3+ data center

- Customer-controlled deployment on-premises, in your own infrastructure

In both models, your company knowledge stays within defined boundaries and under governance controls.

If your documentation includes process IP, customer context, internal operating playbooks, or specialized know-how, privacy is not a secondary concern. It is the starting point.

Larry is built to avoid the usual tradeoff between AI usefulness and data exposure.